Author

Updated: 15 Jun 2023

Form Number: LP0855

PDF size: 18 pages, 630 KB

Abstract

The ThinkSystem QLogic QL45214, QL45212 and QL45262 network adapters for Lenovo Flex System are based on eighth-generation Ethernet technology from Cavium and feature Universal Remote Direct Memory Access (RDMA) to offer concurrent support for RoCE, RoCE v2, and iWARP. The adapters are for customers looking for end-to-end 50 Gb and 25 Gb Ethernet speeds in their Flex System environment as well as those who want to maintain their existing 10Gb networking infrastructure while preparing for future upgrades to 25Gb or 50 Gb network speeds.

This product guide provides essential presales information to understand the QLogic 25/50 GbE Flex adapters and their key features, specifications, and compatibility. This guide is intended for technical specialists, sales specialists, sales engineers, IT architects, and other IT professionals who want to learn more about the adapters and consider their use in a Flex System solution.

Change History

Changes in the June 15, 2023 update:

- The following adapter is withdrawn:

  - ThinkSystem QLogic QL45214 Flex 25Gb 4-Port Ethernet Adapter, 7XC7A05844

Introduction

The ThinkSystem QLogic QL45214, QL45212 and QL45262 network adapters for Lenovo Flex System are based on eighth-generation Ethernet technology from Cavium and feature Universal Remote Direct Memory Access (RDMA) to offer concurrent support for RoCE, RoCE v2, and iWARP. The adapters are for customers looking for end-to-end 50 Gb and 25 Gb Ethernet speeds in their Flex System environment as well as those who want to maintain their existing 10Gb networking infrastructure while preparing for future upgrades to 25Gb or 50 Gb network speeds.

The adapters are shown in the following figure.

Figure 1. QLogic QL45000 25Gb and 50Gb Flex Ethernet Adapters (QL45214, QL45212 and QL45262)

Did you know?

These QLogic adapters enable either 10/25GbE or 10/25/50GbE speeds for greater deployment flexibility. They also support switch-independent NIC partitioning, and the Universal RDMA feature means that the adapters support RoCE, RoCEv2, and iWARP, even concurrently, allowing you to seamlessly migrate from one technology to the other.

Part number information

The part numbers to order the adapters are listed in the following table.

| Part number | Feature code | Description |
|---|---|---|
| 25 Gb Ethernet | | |
| 7XC7A05844 | B2VU | ThinkSystem QLogic QL45214 Flex 25Gb 4-Port Ethernet Adapter |
| 50 Gb Ethernet | | |
| 7XC7A05845 | B2VV | ThinkSystem QLogic QL45262 Flex 50Gb 2-Port Ethernet Adapter with iSCSI/FCoE |
| 7XC7A05843 | B2VT | ThinkSystem QLogic QL45212 Flex 50Gb 2-Port Ethernet Adapter |

Features

The QLogic 25 and 50 Gb Ethernet adapters for Flex System have the following key features:

Support for new 25/50 GbE and existing 10 GbE Flex networking

The QLogic 25 and 50 Gb Ethernet adapters are ideally paired with the new ThinkSystem NE2552E 25/50/100Gb Flex Switch for the highest networking performance. The adapters are also supported with most of the existing Flex System 10Gb I/O modules, so you can buy new ThinkSystem compute nodes that are already enabled for high-speed networking and ready for when you upgrade the embedded switches in your Flex System Enterprise Chassis.

Cost effective single-lane connection

The 25 Gbps Ethernet specification enables network bandwidth to be cost-effectively scaled in support of next-generation server and storage solutions residing in cloud and Web-scale data center environments. 25GbE uses a single-lane connection similar to existing 10GbE technology but delivers 2.5 times the bandwidth, and 50GbE combines two 25 Gb lanes to deliver 5 times the bandwidth. Compared to 40GbE solutions, 25GbE technology provides superior switch port density by requiring just a single lane (versus four lanes with 40GbE), along with lower costs and power requirements. Cavium is a leading innovator driving 25 and 50 GbE technologies across enterprise and cloud market segments.
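The lane and bandwidth comparison above can be checked with a few lines of arithmetic. The following Python sketch is illustrative only: the table and function names are made up for this example, the 10GbE, 25GbE, and 40GbE lane counts come from the paragraph above, and the two-lane figure for 50GbE is taken from the Specifications section of this guide.

```python
# Link speed in Gb/s and number of lanes per link type.
# 10GbE, 25GbE and 40GbE lane counts are from the paragraph above;
# 50GbE (two 25 Gb lanes) is from the Specifications section.
LINKS = {
    "10GbE": (10, 1),
    "25GbE": (25, 1),
    "40GbE": (40, 4),
    "50GbE": (50, 2),
}


def per_lane_gbps(name: str) -> float:
    """Bandwidth carried by each lane of the named link type."""
    speed_gbps, lanes = LINKS[name]
    return speed_gbps / lanes


def speedup_over_10gbe(name: str) -> float:
    """How many times the bandwidth of a 10GbE link the named link provides."""
    return LINKS[name][0] / LINKS["10GbE"][0]


for link_name in LINKS:
    print(f"{link_name}: {per_lane_gbps(link_name):g} Gb/s per lane, "
          f"{speedup_over_10gbe(link_name):g}x the bandwidth of 10GbE")
```

The per-lane figures show why 25GbE improves port density over 40GbE: each 40GbE lane still carries only 10 Gb/s, whereas a 25GbE or 50GbE lane carries 25 Gb/s.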

NIC partitioning and SR-IOV to maximize network utilization

The QLogic adapters support two families of server virtualization technology: single-root I/O virtualization (SR-IOV) and virtual NIC capabilities such as switch-independent NIC partitioning (also known as NPAR or vNIC2) and Unified Fabric Port. With NIC partitioning, each of the physical ports on the adapter can be logically divided into four or eight virtual NIC (vNIC) functions with user-definable bandwidth settings. With SR-IOV, each virtual port can be assigned directly to an individual VM, bypassing the virtual switch in the hypervisor, which results in near-native performance.
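To make the partitioning numbers concrete, the sketch below models the vNIC counts listed in the Specifications section (8 vNICs per port on the 2-port adapters, 4 per port on the 4-port adapter) and shows one possible way to divide a port's bandwidth. The `FlexAdapter` class and the proportional `share_bandwidth` policy are hypothetical illustrations, not the adapter's actual configuration interface; the real bandwidth settings are made in the UEFI configuration pages or the QLogic management tools.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlexAdapter:
    """Illustrative model of an adapter's NIC partitioning limits."""
    model: str
    ports: int
    port_gbps: int
    vnics_per_port: int

    def total_functions(self) -> int:
        """Number of vNIC functions exposed across all physical ports."""
        return self.ports * self.vnics_per_port


def share_bandwidth(port_gbps: float, weights: list[float]) -> list[float]:
    """Split one port's bandwidth across vNICs in proportion to weights.

    Proportional sharing is an assumption made for this example only.
    """
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return [port_gbps * w / total for w in weights]


ql45214 = FlexAdapter("QL45214", ports=4, port_gbps=25, vnics_per_port=4)
ql45212 = FlexAdapter("QL45212", ports=2, port_gbps=50, vnics_per_port=8)

for adapter in (ql45214, ql45212):
    print(adapter.model, "exposes", adapter.total_functions(), "functions")

# Four vNICs on one 25 Gb port, weighted 4:2:1:1.
print(share_bandwidth(ql45214.port_gbps, [4, 2, 1, 1]))
```

Both adapter types arrive at the same total of 16 functions, which matches the 16-physical-function limit given in the specifications.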

High performance Universal RDMA Offload

The QLogic 25 and 50 GbE adapters support RoCE and iWARP acceleration to deliver low latency, low CPU utilization, and high performance on iSER and Windows Server Message Block (SMB) Direct 3.0 / 3.02. The adapters have the unique capability to deliver Universal RDMA to enable RoCE, RoCEv2, and iWARP. Cavium Universal RDMA and emerging low latency I/O bus mechanisms such as Network File System over RDMA (NFSoRDMA) and Non-Volatile Memory Express (NVMe) allow customers to accelerate access to data. Cavium’s cutting-edge offloading technology increases cluster efficiency and scalability to many thousands of nodes.

High density server virtualization

The latest hypervisors and multicore systems use several technologies to increase the scale of virtualization. The QLogic 25 and 50 GbE adapters support:

- VMware NetQueue

- Windows Hyper-V Virtual Machine Queue (VMQ)

- Linux Multiqueue

- Windows, Linux, and VMware switch-independent NIC partitioning (NPAR)

- Windows Hyper-V, Linux Kernel-based Virtual Machine (KVM), and VMware ESXi SR-IOV

These features provide ultimate flexibility, quality of service (QoS), and optimized host and virtual machine (VM) performance while providing full 25 and 50 Gbps bandwidth per port. Public and private cloud virtualized server farms can now achieve 2.5 to 5 times the VM density for the best price and VM ratio.

Wire-speed network virtualization

Enterprise-class data centers can be scaled using overlay networks to carry VM traffic over a logical tunnel using NVGRE, GRE, VXLAN, and GENEVE. Although overlay networks can resolve virtual Local Area Network (VLAN) limitations, native stateless offloading engines are bypassed, which places a higher load on the system’s CPU. The QLogic 25 and 50 GbE adapters efficiently handle this load with advanced NVGRE, GRE, VXLAN, and GENEVE stateless offload engines that access the overlay protocol headers. This access enables traditional stateless offloads of encapsulated traffic with native-level performance in the network. Additionally, the adapters support VMware NSX and Open vSwitch (OVS).

Hyperscale Orchestration With OpenStack

The QLogic 25 and 50 GbE adapters support the OpenStack open source infrastructure for constructing and supervising public, private, and hybrid cloud computing platforms. It provides for both networking and storage services (block, file, and object) for iSER. These platforms allow providers to rapidly and horizontally scale VMs over their entire, diverse, and widely spread network architecture to meet the real-time needs of their customers. Cavium’s integrated, multiprotocol management utility, QConvergeConsole (QCC), provides breakthrough features that allow customers to visualize the OpenStack-orchestrated data center using auto-discovery technology.

Accelerate NFV workloads

In addition to OpenStack, the QLogic 25 and 50 GbE adapters support Network Function Virtualization (NFV) that allows decoupling of network functions and services from dedicated hardware (such as routers, firewalls, and load balancers) into hosted VMs. NFV enables network administrators to flexibly create network functions and services as they need them, reducing capital expenditure and operating expenses, and enhancing business and network services agility. Cavium 25 and 50GbE technology is integrated into the Data Plane Development Kit (DPDK) and can deliver up to 60 million packets per second to host the most demanding NFV workloads.
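For context on the 60 million packets per second figure, the theoretical Ethernet line rate in packets per second can be worked out from the frame size. The sketch below is a back-of-the-envelope calculation, not a benchmark: it assumes the standard 20 bytes of per-frame wire overhead (preamble, start-of-frame delimiter, and inter-frame gap), and the guide does not state at which frame size, or whether per port or per adapter, the 60 Mpps figure was measured.

```python
# Per-frame overhead on the wire that is not part of the frame itself:
# 7-byte preamble + 1-byte start-of-frame delimiter + 12-byte inter-frame gap.
ETH_OVERHEAD_BYTES = 20

QUOTED_MAX_MPPS = 60  # figure quoted in this guide


def line_rate_mpps(link_gbps: float, frame_bytes: int = 64) -> float:
    """Theoretical maximum packet rate in millions of packets per second."""
    bits_on_wire = (frame_bytes + ETH_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_on_wire / 1e6


for speed in (10, 25, 50):
    rate = line_rate_mpps(speed)
    note = "above" if rate > QUOTED_MAX_MPPS else "within"
    print(f"{speed} GbE, 64-byte frames: {rate:.1f} Mpps "
          f"({note} the quoted {QUOTED_MAX_MPPS} Mpps)")
```

With minimum-size 64-byte frames, a 25 Gb link tops out at roughly 37.2 Mpps and a 50 Gb link at roughly 74.4 Mpps, so the quoted maximum sits between the two theoretical limits.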

Specifications

The QLogic 25 Gb and 50 Gb adapters have the following technical specifications:

- Cavium FastLinQ 45000 ASIC

- PCIe 3.0 x16 host interface (standard Flex System compute node I/O adapter connection)

- Two internal 50GBase-KR SERDES interfaces (each interface is 2 lanes of 25 Gb/s) to the chassis midplane

  - QL45214: Four 25 Gb connections

  - QL45212 and QL45262: Two 50 Gb connections (each connection is two 25 Gb lanes combined)

- All connections are internal to the Flex chassis; no transceivers or cables are required

- Supports Message Signaled Interrupts (MSI-X)

- Support for PXE boot, iSCSI boot and Wake-on-LAN (WOL)

- Networking Features

  - Jumbo frames (up to 9600 bytes)

  - 802.3x flow control

  - Link Aggregation (IEEE 802.1AX-2008)

  - Virtual LANs: 802.1Q VLAN tagging

  - Configurable Flow Acceleration

  - Congestion Avoidance

  - IEEE 1588 and Time Sync

  - Forward Error Correction Clause 74, support for 25 Gbps and 50 Gbps

- Performance

  - Data Plane Development Kit (DPDK) support

  - Maximum 60 million packets per second

  - Low latency

  - 50 Gbps line rate per port in 50GbE mode (50 GbE adapters)

  - 25 Gbps line rate per port in 25GbE mode

  - 10 Gbps line rate per port in 10GbE mode

- Stateless Offload Features

  - IP, TCP, and user datagram protocol (UDP) checksum offloads

  - TCP segmentation offload (TSO)

  - Large send offload (LSO)

  - Giant send offload (GSO)

  - Large receive offload (LRO) (Linux)

  - Receive segment coalescing (RSC) (Windows)

  - Receive side scaling (RSS)

  - Transmit side scaling (TSS)

  - Interrupt coalescing

- Virtualization

  - VMware NetQueue support

  - Microsoft Hyper-V VMQ support (up to 208 dynamic queues)

  - Linux Multiqueue support

  - PCI SIG SR-IOV compliant with support for 192 Virtual Functions

  - Virtual NIC (vNIC) / Network Partitioning (NPAR) with support for up to 16 physical functions

    - 2-port adapters support 8 vNICs per port

    - 4-port adapter supports 4 vNICs per port

  - Unified Fabric Port (UFP) (16 physical functions)

  - VXLAN-aware stateless offloads

  - NVGRE-aware stateless offloads

  - Geneve-aware stateless offloads

  - IP-in-IP-aware stateless offloads

  - GRE-aware stateless offloads

  - Stateless Transport Tunneling

  - Edge Virtual Bridging (EVB)

  - Per Virtual Function (VF) statistics

  - VF Receive-Side Scaling (RSS)/Transmit-Side Scaling (TSS)

- RDMA over Converged Ethernet (RoCE)

  - RoCEv1

  - RoCEv2

- iSCSI Extensions for RDMA (iSER)

- Internet Wide Area RDMA Protocol (iWARP)

- Storage over RDMA: iSER, SMB Direct, and NVMe over Fabrics

- NFSoRDMA

- Tunneling Offloads

  - Virtual Extensible LAN (VXLAN)

  - Generic Network Virtualization Encapsulation (GENEVE)

  - Network Virtualization using Generic Routing Encapsulation (NVGRE)

  - Linux Generic Routing Encapsulation (GRE)

- Data Center Bridging (DCB)

  - Priority-based flow control (PFC; IEEE 802.1Qbb)

  - Enhanced transmission selection (ETS; IEEE 802.1Qaz)

  - Quantized Congestion Notification (QCN; IEEE 802.1Qau)

  - Data Center Bridging Capability eXchange (DCBX; IEEE 802.1Qaz)

- Storage offloads (QL45262 adapter only)

  - FCoE Hardware Offload

  - iSCSI Hardware Offload

- Manageability

  - QLogic Control Suite integrated network adapter management utility (CLI) for Linux and Windows

  - QConvergeConsole integrated network management utility (GUI) for Linux and Windows

  - QConvergeConsole Plug-ins for vSphere (GUI) and ESXCLI plug-in for VMware

  - QConvergeConsole PowerKit (Windows PowerShell) cmdlets for Linux and Windows

  - UEFI-based device configuration pages

  - Native OS management tools for networking

  - Full support for XClarity Administrator, Lenovo OneCLI, ASU, and firmware updates

  - SNIA HBA API v2 and SMI-S APIs

  - Support for PLDM for Redfish Device Enablement standard (DMTF DSP0218)

  - Support for MCTP for increased security when using PCIe VDM (DMTF DSP0238)

- Power Saving

  - ACPI compliant power management

  - PCI Express eCLKREQ support

  - PCI Express unused lane powered down

  - Ultra low-power mode

  - Power Management (PM) Offload

IEEE Standards

The adapter supports these IEEE specifications:

- IEEE 802.1AS (Precise Synchronization)

- IEEE 802.1AX-2008 (Link Aggregation; formerly IEEE 802.3ad)

- IEEE 802.1Q (VLAN)

- IEEE 802.1Qaz (DCBX and ETS)

- IEEE 802.1Qbb (Priority-based Flow Control)

- IEEE 802.3-2015 (10Gb and 25Gb Ethernet flow control)

- IEEE 802.3by-2016 (25G Ethernet)

- IEEE 1588-2002 PTPv1 (Precision Time Protocol)

- IEEE 1588-2008 PTPv2

The adapter supports these additional specifications:

- IPv4 (RFC 791)

- IPv6 (RFC 2460)

Server support

The following table lists the compute nodes that support the adapters.

| Part number | Description | x240 (8737, E5-2600 v2) | x240 (7162) | x240 M5 (9532, E5-2600 v3) | x240 M5 (9532, E5-2600 v4) | x440 (7167) | x880/x480/x280 X6 (7903) | x280/x480/x880 X6 (7196) | SN550 (7X16) | SN850 (7X15) | SN550 V2 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 7XC7A05844 | ThinkSystem QLogic QL45214 Flex 25Gb 4-Port Ethernet Adapter | N | N | N | N | N | N | N | Y | Y | Y |
| 7XC7A05845 | ThinkSystem QLogic QL45262 Flex 50Gb 2-Port Ethernet Adapter with iSCSI/FCoE | N | N | N | N | N | N | N | Y | Y | Y |
| 7XC7A05843 | ThinkSystem QLogic QL45212 Flex 50Gb 2-Port Ethernet Adapter | N | N | N | N | N | N | N | Y | Y | Y |

I/O adapter cards are installed in the slot in supported servers, such as the SN550, as highlighted in the following figure.

Figure 2. Location of the I/O adapter slots in the ThinkSystem SN550 server

Internal connectivity

The QL45000 adapters do not use transceivers or cables for connectivity to switches. Instead, the adapters connect to the Flex System switches and I/O modules installed in the chassis via internal connections.

The following table shows the connections between adapters installed in the compute nodes and the switch bays in the chassis.

| I/O adapter slot in the compute node | Port on the adapter | I/O module bay 1 | I/O module bay 2 | I/O module bay 3 | I/O module bay 4 |
|---|---|---|---|---|---|
| Slot 1 | Port 1 | Yes | | | |
| | Port 2 | | Yes | | |
| | Port 3 (QL45214 only) | Yes | | | |
| | Port 4 (QL45214 only) | | Yes | | |
| Slot 2 | Port 1 | | | Yes | |
| | Port 2 | | | | Yes |
| | Port 3 (QL45214 only) | | | Yes | |
| | Port 4 (QL45214 only) | | | | Yes |
| Slot 3 (full-wide compute nodes only) | Port 1 | Yes | | | |
| | Port 2 | | Yes | | |
| | Port 3 (QL45214 only) | Yes | | | |
| | Port 4 (QL45214 only) | | Yes | | |
| Slot 4 (full-wide compute nodes only) | Port 1 | | | Yes | |
| | Port 2 | | | | Yes |
| | Port 3 (QL45214 only) | | | Yes | |
| | Port 4 (QL45214 only) | | | | Yes |
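The slot-and-port routing can also be expressed as a small lookup function. This Python sketch assumes the usual Flex System midplane wiring, in which adapter slots 1 and 3 connect to I/O module bays 1 and 2, slots 2 and 4 connect to bays 3 and 4, and odd-numbered ports go to the first bay of each pair. The function name is made up for illustration, and the mapping should be confirmed against the table and figures in this section before it is relied on.

```python
def io_module_bay(slot: int, port: int) -> int:
    """Return the chassis I/O module bay that an adapter port connects to.

    Assumed wiring (verify against the table in this section):
      slots 1 and 3 -> bays 1 and 2, slots 2 and 4 -> bays 3 and 4,
      odd ports -> first bay of the pair, even ports -> second bay.
    Ports 3 and 4 exist only on the 4-port QL45214.
    """
    if slot not in (1, 2, 3, 4):
        raise ValueError(f"slot must be 1-4, got {slot}")
    if port not in (1, 2, 3, 4):
        raise ValueError(f"port must be 1-4, got {port}")
    first_bay = 1 if slot % 2 == 1 else 3
    return first_bay + (port - 1) % 2


for slot in (1, 2):
    for port in (1, 2, 3, 4):
        print(f"slot {slot}, port {port} -> bay {io_module_bay(slot, port)}")
```

Because each adapter reaches two different bays, installing I/O modules in pairs gives every adapter a redundant path.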

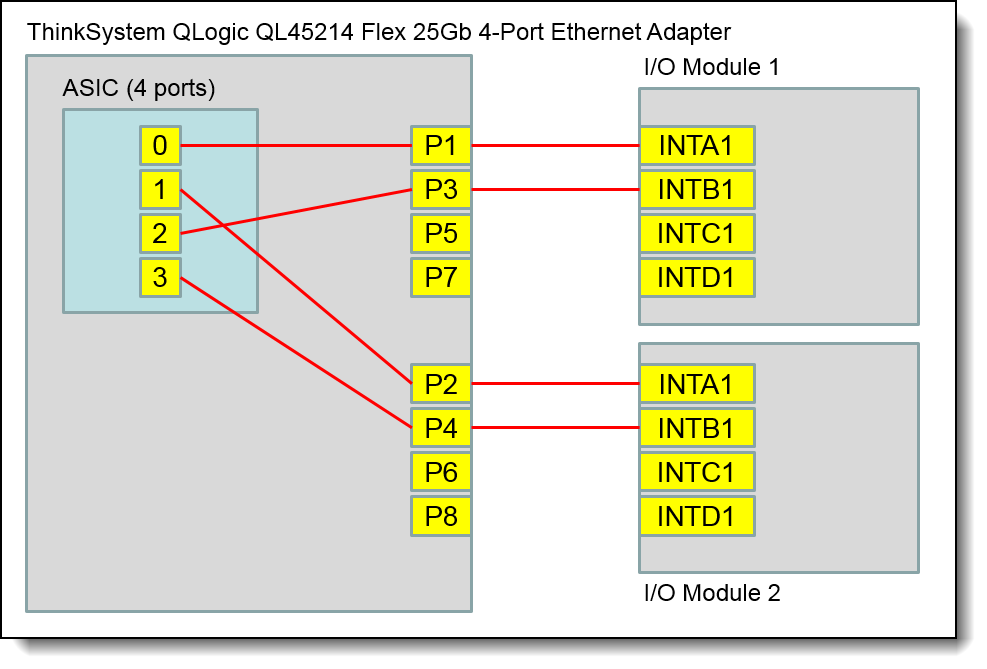

The following figure shows the internal layout of the 4-port 25Gb QL45214 adapter, with how the adapter ports are routed to the I/O module internal ports.

Figure 3. Internal layout of the QL45214 adapter ports

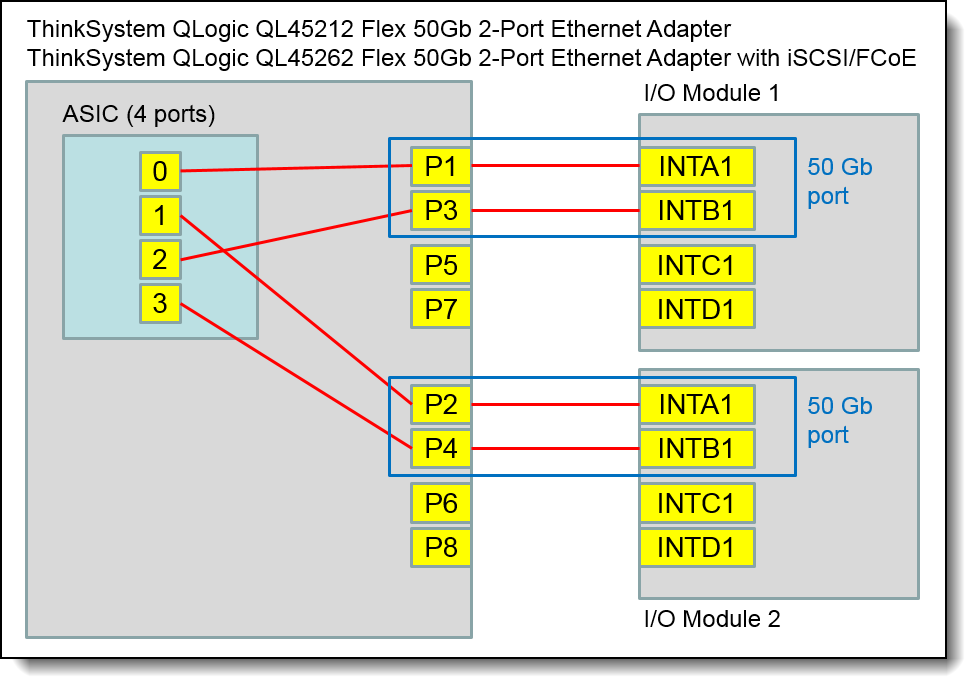

The following figure shows the internal layout of the 2-port 50 Gb QL45212 and QL45262 adapters, and how the adapter ports are routed to the I/O module internal ports. As shown, two 25 Gb ports are combined to form a 50 Gb connection.

Figure 4. Internal layout of the QL45212 and QL45262 adapter ports

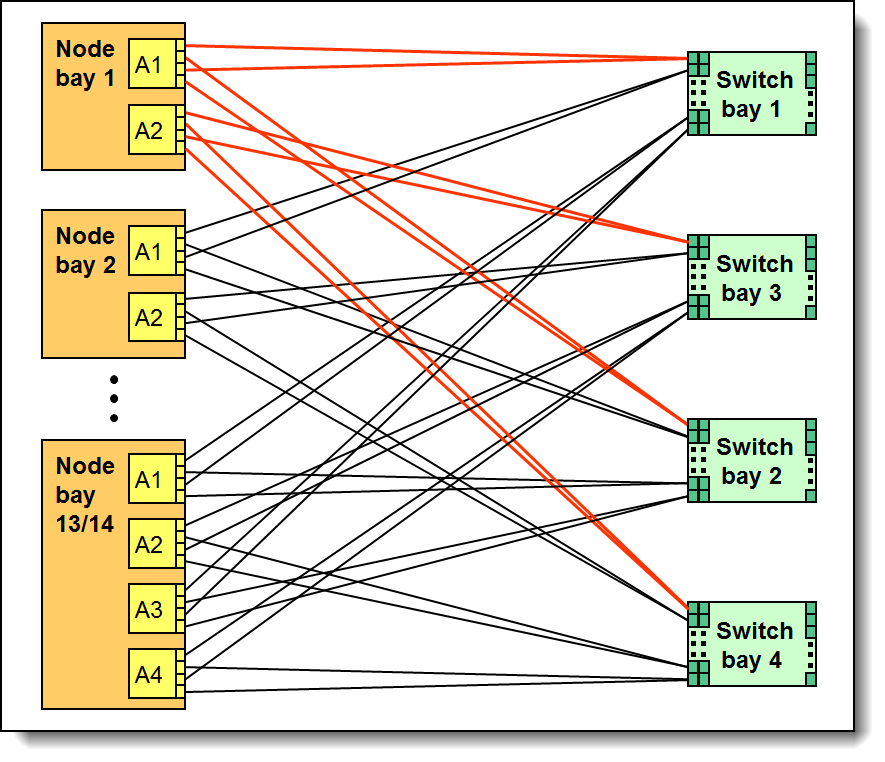

The connections between the adapters installed in the compute nodes to the switch bays in the chassis are shown diagrammatically in the following figure. The figure shows half-wide servers (such as the SN550 with two adapters) and full-wide servers (such as the SN850 with four adapters).

Figure 5. Logical layout of the interconnects between I/O adapters and I/O modules

I/O module support

These adapters can be installed in any I/O adapter slot of a supported Flex System compute node. One or two compatible I/O modules must be installed in the corresponding I/O bays in the chassis. When connected to a 10 Gb switch, the adapter operates at 10 Gb speeds.

To maximize the number of adapter ports usable, you may also need to order switch upgrades to enable additional ports. Alternatively, for CN4093, EN4093R, and SI4093 switches, you can use Flexible Port Mapping, a feature of Networking OS 7.8 or later, that allows you to minimize the number of upgrades needed.

See the Product Guides for the Flex System switches for more details about switch upgrades and Flexible Port Mapping:

https://lenovopress.com/servers/blades/networkmodule

The table below specifies how many adapter ports are enabled with each I/O module. For the 4-port QL45214, to enable all four adapter ports on the 10 Gb switches marked with **, either upgrade the switch or use Flexible Port Mapping. Switches should be installed in pairs to maximize the number of ports enabled and to provide redundant network connections.

| Part number | Description | Number of ports†: QL45214 (25Gb) | Number of ports†: QL45212, QL45262 (50Gb) |
|---|---|---|---|
| Supported 25 Gb and 50 Gb Ethernet | | | |
| 4SG7A08868 | Lenovo ThinkSystem NE2552E Flex Switch | 4 | 2 |
| Supported 10 Gb Ethernet | | | |
| 00FM514 | Lenovo Flex System Fabric EN4093R 10Gb Scalable Switch | 4** | 2 |
| 00FM510* | Lenovo Flex System Fabric CN4093 10Gb Converged Scalable Switch | 4** | 2 |
| 00FM518 | Lenovo Flex System Fabric SI4093 System Interconnect Module | 4** | 2 |
| 00FE327 | Lenovo Flex System SI4091 10Gb System Interconnect Module | 2 | 2 |
| 00D5823* | Flex System Fabric CN4093 10Gb Converged Scalable Switch | 4** | 2 |
| 95Y3309* | Flex System Fabric EN4093R 10Gb Scalable Switch | 4** | 2 |
| 95Y3313* | Flex System Fabric SI4093 System Interconnect Module | 4** | 2 |
| Not supported | | | |
| 90Y9346* | Flex System EN6131 40Gb Ethernet Switch | - | - |
| 88Y6043 | Flex System EN4091 10Gb Ethernet Pass-thru | - | - |
| 49Y4294* | Flex System EN2092 1Gb Ethernet Scalable Switch | - | - |
| 94Y5350* | Cisco Nexus B22 Fabric Extender for Flex System | - | - |
| 94Y5212* | Flex System EN4023 10Gb Scalable Switch | - | - |
| 49Y4270* | Flex System Fabric EN4093 10Gb Scalable Switch | - | - |

* Withdrawn from marketing

† This is the number of adapter ports that will be enabled per adapter, and requires that two switches be installed in the chassis.

** The use of 4 ports will require either a switch upgrade to enable additional ports or the use of Flexible Port Mapping to reconfigure the active ports
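The port counts in the table lend themselves to a simple lookup when planning a configuration. The Python sketch below encodes only the supported switch models from the table; the dictionary layout and the `enabled_ports` function are hypothetical helpers written for this example, and the counts assume two switches are installed, as the † footnote requires.

```python
# Switch model -> (ports enabled on the 4-port QL45214,
#                  ports enabled on the 2-port QL45212/QL45262,
#                  True if 4 ports need a switch upgrade or Flexible Port Mapping)
PORTS_BY_SWITCH = {
    "NE2552E": (4, 2, False),
    "EN4093R": (4, 2, True),
    "CN4093": (4, 2, True),
    "SI4093": (4, 2, True),
    "SI4091": (2, 2, False),
}

FOUR_PORT_ADAPTERS = {"QL45214"}
TWO_PORT_ADAPTERS = {"QL45212", "QL45262"}


def enabled_ports(adapter: str, switch: str) -> tuple[int, bool]:
    """Return (ports enabled per adapter, whether an upgrade or FPM is needed)."""
    if switch not in PORTS_BY_SWITCH:
        raise KeyError(f"{switch} is not a supported I/O module in this sketch")
    four_port, two_port, needs_upgrade = PORTS_BY_SWITCH[switch]
    if adapter in FOUR_PORT_ADAPTERS:
        return four_port, needs_upgrade
    if adapter in TWO_PORT_ADAPTERS:
        return two_port, False
    raise KeyError(f"unknown adapter {adapter}")


print(enabled_ports("QL45214", "EN4093R"))
print(enabled_ports("QL45262", "NE2552E"))
```

Unsupported modules are deliberately left out of the dictionary so that a lookup fails loudly instead of returning a port count of zero.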

Operating system support

The following tables list the operating system support:

- ThinkSystem QLogic QL45214 Flex 25Gb 4-port Ethernet Adapter, 7XC7A05844

- ThinkSystem QLogic QL45262 Flex 50Gb 2-port Ethernet Adapter with iSCSI/FCoE, 7XC7A05845

- ThinkSystem QLogic QL45212 Flex 50Gb 2-port Ethernet Adapter, 7XC7A05843

| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) |

|---|---|---|---|---|---|

| Microsoft Windows Server 2012 R2 | N | N | N | Y | Y |

| Microsoft Windows Server 2016 | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2019 | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y |

| Microsoft Windows Server version 1709 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 6.10 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 6.9 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.3 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.4 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.5 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.6 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.7 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.8 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.9 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.0 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.1 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.2 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.3 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.4 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.5 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.6 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.8 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.9 | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 11 SP4 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 11 SP4 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 with Xen | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP1 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP1 with Xen | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP2 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP2 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP3 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP3 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP4 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP4 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 with Xen | N | Y | Y | Y | Y |

| Ubuntu 18.04.5 LTS | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.0 U3 | N | N | N | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 U1 | N | N | N | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 U2 | N | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U1 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U2 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U3 | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 U1 | N | Y 1 | Y 1 | Y 1 | Y 1 |

| VMware vSphere Hypervisor (ESXi) 7.0 U2 | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 U3 | Y | Y | Y | Y | Y |

1 The hardware iSCSI and FCoE features are not qualified, because Lenovo has no storage target that supports hardware iSCSI and FCoE under ESXi 7.x.

| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) |

|---|---|---|---|---|---|

| Microsoft Windows Server 2012 R2 | N | N | N | Y | Y |

| Microsoft Windows Server 2016 | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2019 | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y |

| Microsoft Windows Server version 1709 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 6.10 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 6.9 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.3 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.4 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.5 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.6 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.7 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.8 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.9 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.0 | N | N | Y | Y | Y |

| Red Hat Enterprise Linux 8.1 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.2 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.3 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.4 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.5 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.6 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.8 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.9 | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 11 SP4 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 11 SP4 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 with Xen | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP1 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP1 with Xen | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP2 | Y | Y 1 | Y 1 | Y 1 | Y 1 |

| SUSE Linux Enterprise Server 15 SP2 with Xen | Y | Y 1 | Y 1 | Y 1 | Y 1 |

| SUSE Linux Enterprise Server 15 SP3 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP3 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP4 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP4 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 with Xen | N | Y | Y | Y | Y |

| Ubuntu 18.04.5 LTS | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.0 U3 | N | N | N | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 U1 | N | N | N | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 U2 | N | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U1 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U2 | N | N | N | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U3 | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 | N | Y | Y 2 | Y | Y 2 |

| VMware vSphere Hypervisor (ESXi) 7.0 U1 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 U2 | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 U3 | Y | Y | Y | Y | Y |

1 An out-of-box driver is required to support the iSCSI and FCoE functions.

2 An out-of-box driver is required to support the FCoE and iSCSI features.

| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) |

|---|---|---|---|---|---|

| Microsoft Windows Server 2012 R2 | N | N | N | Y | Y |

| Microsoft Windows Server 2016 | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2019 | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y |

| Microsoft Windows Server version 1709 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 6.10 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 6.9 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.3 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.4 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.5 | N | N | N | Y | Y |

| Red Hat Enterprise Linux 7.6 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.7 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.8 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.9 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.0 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.1 | N | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.2 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.3 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.4 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.5 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.6 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.8 | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.9 | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 11 SP4 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 11 SP4 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 with Xen | N | N | N | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 with Xen | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP1 | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP1 with Xen | N | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP2 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP2 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP3 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP3 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP4 | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 SP4 with Xen | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 with Xen | N | Y | Y | Y | Y |

| Ubuntu 18.04.5 LTS | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.0 U3 | N | N | N | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 U1 | N | N | N | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 U2 | N | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U1 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U2 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.7 U3 | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 U1 | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 U2 | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 7.0 U3 | Y | Y | Y | Y | Y |

Warranty

The adapters have a 1-year, customer-replaceable unit (CRU) limited warranty. When installed in a server, these adapters assume the server’s base warranty and any warranty upgrade purchased for the server.

Physical specifications

The adapters have the following dimensions and weight:

- Width: 100 mm (3.9 in.)

- Depth: 80 mm (3.1 in.)

- Weight: 13 g (0.3 lb)

The adapters have the following shipping dimensions and weight (approximate):

- Height: 58 mm (2.3 in.)

- Width: 229 mm (9.0 in.)

- Depth: 208 mm (8.2 in.)

- Weight: 0.4 kg (0.89 lb)

Regulatory compliance

The adapters conform to the following regulatory standards:

- United States FCC 47 CFR Part 15, Subpart B, ANSI C63.4 (2003), Class A

- United States UL 60950-1, Second Edition

- IEC/EN 60950-1, Second Edition

- FCC - Verified to comply with Part 15 of the FCC Rules, Class A

- Canada ICES-003, issue 4, Class A

- UL/IEC 60950-1

- CSA C22.2 No. 60950-1-03

- Japan VCCI, Class A

- Australia/New Zealand AS/NZS CISPR 22:2006, Class A

- IEC 60950-1(CB Certificate and CB Test Report)

- Taiwan BSMI CNS13438, Class A

- Korea KN22, Class A; KN24

- Russia/GOST ME01, IEC-60950-1, GOST R 51318.22-99, GOST R 51318.24-99, GOST R 51317.3.2-2006, GOST R 51317.3.3-99

- IEC 60950-1 (CB Certificate and CB Test Report)

- CE Mark (EN55022 Class A, EN60950-1, EN55024, EN61000-3-2, EN61000-3-3)

- CISPR 22, Class A

Related publications and links

For more information, see the following resources:

- Product Guide for the Lenovo ThinkSystem NE2552E Flex Switch

  http://lenovopress.com/LP0854

- Datasheet for the Lenovo ThinkSystem NE2552E Flex Switch

  http://lenovopress.com/DS0040

- 3D Interactive Tour for the Lenovo ThinkSystem NE2552E Flex Switch

  http://lenovopress.com/LP0871

- Lenovo Press product guides for Flex System I/O modules

  https://lenovopress.com/servers/blades/networkmodule

- Lenovo Press product guides for Flex System compute nodes

  https://lenovopress.com/servers/blades/server

- Flex System Information Center (User's Guides for servers and options)

  http://flexsystem.lenovofiles.com/help/index.jsp

- Flex System Interoperability Guide

  http://lenovopress.com/fsig

- Flex System Products and Technology

  http://lenovopress.com/sg248255

- ServerProven

  http://www.lenovo.com/us/en/serverproven

Trademarks

Lenovo and the Lenovo logo are trademarks or registered trademarks of Lenovo in the United States, other countries, or both. A current list of Lenovo trademarks is available on the Web at https://www.lenovo.com/us/en/legal/copytrade/.

The following terms are trademarks of Lenovo in the United States, other countries, or both:

Lenovo®

Flex System

ServerProven®

ThinkSystem®

XClarity®

The following terms are trademarks of other companies:

Xeon® is a trademark of Intel Corporation or its subsidiaries.

Linux® is the trademark of Linus Torvalds in the U.S. and other countries.

Microsoft®, Hyper-V®, PowerShell, Windows PowerShell®, Windows Server®, and Windows® are trademarks of Microsoft Corporation in the United States, other countries, or both.

Other company, product, or service names may be trademarks or service marks of others.

Full Change History

Changes in the June 15, 2023 update:

- The following adapter is withdrawn:

  - ThinkSystem QLogic QL45214 Flex 25Gb 4-Port Ethernet Adapter, 7XC7A05844

Changes in the July 28, 2022 update:

- Updated the OS support information - Operating system support section

Changes in the May 31, 2018 update:

- XClarity Administrator support is planned for 3Q/2018

First published: 7 May 2018