Author

Updated

31 Aug 2022

Form Number

LP0054

PDF size

21 pages, 429 KB

Abstract

The CN4054S 4-port and CN4052S 2-port 10Gb Virtual Fabric Adapters are VFA5.2 adapters that are supported on ThinkSystem and Flex System compute nodes.

This product guide provides essential presales information to understand the CN4054S and CN4052S adapters and their key features, specifications, and compatibility. This guide is intended for technical specialists, sales specialists, sales engineers, IT architects, and other IT professionals who want to learn more about the adapters and consider their use in a Flex System solution.

Change History

Changes in the August 31, 2022 update:

- The following adapter is withdrawn:

- Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter Advanced, 01CV790

Learn about the adapters with David Watts and Hemal Purohit

Introduction

The CN4054S 4-port and CN4052S 2-port 10Gb Virtual Fabric Adapters are VFA5.2 adapters that are supported on ThinkSystem and Flex System compute nodes.

The CN4052S can be divided into up to eight virtual NIC (vNIC) devices per port (for a total of 16 vNICs), and the CN4054S can be divided into up to four vNICs per port (also for a total of 16 vNICs). Each vNIC can have flexible bandwidth allocation. These adapters also feature RDMA over Converged Ethernet (RoCE) capability and support the iSCSI and FCoE protocols, either as standard or with the addition of a Features on Demand (FoD) license upgrade.

The adapters are shown in the following figure. The CN4054S and CN4052S look the same.

Figure 1. Flex System CN4054S and CN4052S 10Gb Virtual Fabric Adapters

Did you know?

The CN4054S and CN4052S are based on the new Emulex XE100-P2 "Skyhawk P2" ASIC which enables better performance, especially with the new RDMA over Converged Ethernet v2 (RoCE v2) support. In addition, these adapters are supported by Lenovo XClarity Administrator, which allows you to deploy adapter settings more easily and incorporate the adapters in configuration patterns.

The CN4052S adapter now supports 8 vNICs per port when using UFP or vNIC2 with adapter firmware 10.6 or later, for a total of 16 vNICs. The CN4054S still supports 4 vNICs per port.

Part number information

The part numbers to order the adapters are listed in the following table.

The software upgrade licenses listed are only for Flex System compute nodes, not ThinkSystem compute nodes. The licenses are Features on Demand field license upgrades that enable the FCoE and iSCSI converged networking capabilities of the supported adapters. Adapters 01CV780 and 01CV790 already have these features enabled and do not need the FoD upgrades.

| Part number | Feature code | Description |

|---|---|---|

| Adapters with FCoE and iSCSI standard | ||

| 01CV780 | AU7X | Flex System CN4052S 2-port 10Gb Virtual Fabric Adapter Advanced |

| 01CV790 | AU7Y | Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter Advanced |

| Adapters without FCoE and iSCSI (optional for Flex System compute nodes) | ||

| 00AG540 | ATBT | Flex System CN4052S 2-port 10Gb Virtual Fabric Adapter |

| 00AG590 | ATBS | Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter |

| Features on Demand upgrades - not supported with ThinkSystem compute nodes | ||

| 00JY804 | A5RV | Flex System CN4052 Virtual Fabric Adapter SW Upgrade (FoD) |

| 00AG594 | ATBU | Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter SW Upgrade (FoD) |
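As an illustration of the ordering rules above, the following Python sketch encodes which adapters include FCoE and iSCSI as standard and which need an FoD upgrade. The part numbers come from the table. The function name, the platform labels `"flex"` and `"thinksystem"`, and the pairing of each base adapter with its upgrade (inferred from the product descriptions) are assumptions made for this example, not part of any Lenovo tool.

```python
# Part numbers are taken from the table above; the structure is illustrative only.
STANDARD_STORAGE = {"01CV780", "01CV790"}  # FCoE and iSCSI enabled as standard

# Base adapter -> FoD upgrade (pairing inferred from the product descriptions).
FOD_UPGRADE = {"00AG540": "00JY804", "00AG590": "00AG594"}


def storage_offload_status(adapter_pn, platform):
    """Describe how FCoE/iSCSI can be enabled for an adapter.

    platform is a hypothetical label: "flex" or "thinksystem".
    """
    if adapter_pn in STANDARD_STORAGE:
        return "standard"
    if adapter_pn in FOD_UPGRADE:
        if platform == "flex":
            return f"requires FoD upgrade {FOD_UPGRADE[adapter_pn]}"
        # FoD upgrades are listed as Flex System only.
        return "not available (FoD upgrades are not supported on ThinkSystem)"
    raise ValueError(f"unknown adapter part number: {adapter_pn}")
```

For example, `storage_offload_status("00AG590", "flex")` reports that upgrade 00AG594 is needed, while either Advanced adapter returns `"standard"` on both platforms.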

Features

The CN4054S 4-port 10Gb Virtual Fabric Adapter and CN4052S 2-port 10Gb Virtual Fabric Adapter, which are part of the VFA5.2 family of System x and Flex System adapters, reduce cost by enabling a converged infrastructure and improve performance with powerful offload engines. The adapters have the following features and benefits:

- Multiprotocol support for 10 GbE

The adapters offer two (CN4052S) or four (CN4054S) 10 GbE connections and are cost- and performance-optimized for integrated and converged infrastructures. They offer a “triple play” of converged data, storage, and low latency RDMA networking on a common Ethernet fabric. The adapters provide customers with a flexible storage protocol option for running heterogeneous workloads on their increasingly converged infrastructures.

- Virtual NIC emulation

The Emulex VFA5.2 family supports three NIC virtualization modes as standard: Virtual Fabric mode (vNIC1), switch independent mode (vNIC2), and Unified Fabric Port (UFP). With NIC virtualization, each of the physical ports on the adapter can be logically configured to emulate up to four or eight virtual NIC (vNIC) functions with user-definable bandwidth settings. With UFP or vNIC2, the CN4052S supports eight vNICs per port and the CN4054S supports four. With vNIC1, both adapters support four vNICs per port. Additionally, each physical port can simultaneously support a storage protocol (FCoE or iSCSI).

- Full hardware storage offloads

These adapters support an FCoE hardware offload engine, which is either standard with the adapter or optionally enabled via Lenovo Features on Demand (FoD), depending on the adapter selected. The offload engine accelerates storage protocol processing and delivers up to 1.5 million I/O operations per second (IOPS). This enables the server’s processing resources to focus on applications and improves the server’s overall performance.

- Lenovo Features on Demand (not supported with ThinkSystem compute nodes)

Adapters 00AG540 and 00AG590 use Features on Demand (FoD) software activation technology to enable additional features on Flex System compute nodes (not supported with ThinkSystem compute nodes). FoD enables the adapters to be initially deployed as low-cost Ethernet NICs, and later upgraded in the field to support FCoE or iSCSI hardware offload. Adapters 00AG540 and 00AG590 use FoD to enable FCoE and iSCSI, whereas adapters 01CV780 and 01CV790 already have these features enabled and do not use Features on Demand.

- Emulex Virtual Network Acceleration (VNeX) technology support

Emulex VNeX supports Microsoft Network Virtualization using Generic Routing Encapsulation (NVGRE) and VMware-supported Virtual Extensible LAN (VXLAN). These technologies create more logical LANs for traffic isolation that are needed by cloud architectures. Because these protocols increase the processing burden on the server’s processor, the adapters have an offload engine that is designed specifically for processing these tags. As a result, cloud providers can use VXLAN or NVGRE with no reduction in the server’s performance.

- Advanced RDMA capabilities with RoCE v2

The XE100-P2 controllers support the new InfiniBand Trade Association (IBTA) RDMA over Converged Ethernet v2 (RoCE v2) specification for Layer 3 routing. RoCE v2 delivers application and storage acceleration through faster I/O operations and support for Windows Server SMB Direct and Linux NFS protocols.

The XE100-P2 controllers leverage RoCE v2, enabling server-to-server data movement directly between application memory, without any CPU involvement. This provides high throughput and data acceleration on a standard Ethernet fabric, without the need for specialized infrastructure or management.

RoCE also helps accelerate workloads in the following ways:

- Capability to deliver a 4x boost in small packet network performance vs. previous generation adapters, which is critical for transaction-heavy workloads

- Desktop-like experiences for VDI with up to 1.5 million FCoE or iSCSI I/O operations per second (IOPS)

- Ability to scale Microsoft SQL Server, Exchange, and SharePoint using SMB Direct optimized with RoCE

- More VDI desktops per server, due to up to 18% higher CPU effectiveness (the percentage of server CPU utilization for every 1 Mbps I/O throughput)

- Superior application performance for VMware hybrid cloud workloads, with up to 129% higher I/O throughput compared to adapters without offload

- Lenovo XClarity support

The Emulex VFA5.2 family offers improved support for Lenovo XClarity:

- Supports agent-free management, eliminating platform agents to reduce complexity

- Supports automatic inventory management to simplify management

- Supports real-time monitoring, fault detection and alert handling for a more secure managed environment

- Supports automatic firmware downloads and installation for true bare metal provisioning

- Deploy faster and manage less when multiple Emulex Virtual Fabric adapters (VFAs) and Host Bus Adapters (HBAs) are combined

VFAs and HBAs that are developed by Emulex use the same installation and configuration process, which streamlines the effort to get your server running and saves you valuable time. They also use the same Fibre Channel drivers, which reduces the time needed to qualify and manage storage connectivity. With Emulex's OneCommand Manager, you can manage multiple Emulex VFAs and HBAs from a single console.

Specifications

The Flex System CN4054S 4-port and CN4052S 2-port 10Gb Virtual Fabric Adapters have the following specifications:

- Four (CN4054S) or two (CN4052S) 10 Gb Ethernet ports

- Based on the Emulex XE100-P2 series design:

- CN4054S: Single XE104-P2 ASIC

- CN4052S: Single XE102-P2 ASIC

- PCI Express 3.0 x8 host interface

- vNIC (NIC partition) capability

- MSI-X support

- Lenovo XClarity Administrator support

- Power consumption: 25 W maximum

Virtualization features

- vNIC partitions with UFP mode

- CN4052S: 8 NIC partitions per port, 16 total*

- CN4054S: 4 NIC partitions per port, 16 total

- vNIC partitions with vNIC2 mode:

- CN4052S: 8 NIC partitions per port, 16 total*

- CN4054S: 4 NIC partitions per port, 16 total

- vNIC partitions with vNIC1 mode:

- CN4052S: 4 NIC partitions per port, 8 total

- CN4054S: 4 NIC partitions per port, 16 total

- Complies with PCI-SIG specification for SR-IOV

- VXLAN/NVGRE encapsulation and offload

- PCI-SIG Address Translation Service (ATS) v1.0

- Virtual Switch Port Mirroring for Diagnostic purposes

- Virtual Ethernet Bridge (VEB)

- 62 Virtual functions (VF)

- QoS for controlling and monitoring bandwidth that is assigned to and used by virtual entities

- Traffic shaping and QoS across each virtual function (VF) and physical function (PF)

- Message Signaled Interrupts (MSI-X) support

- VMware NetQueue / VMQ support

- Microsoft VMQ & Dynamic VMQ support in Hyper-V

* 8 NIC partitions per port requires adapter firmware 10.6 or later; UFP mode also requires switch firmware 8.3 or later
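The per-port and per-adapter vNIC counts above follow from simple arithmetic: ports multiplied by partitions per port, subject to the firmware footnote. The following Python sketch shows that calculation. All names are hypothetical, and the fallback to four partitions per port with earlier firmware is an assumption based on the notes in the Modes of operation section. The sketch follows the footnote in requiring switch firmware 8.3 only for UFP mode.

```python
# Illustrative data taken from the list above; all names are hypothetical.
PORTS = {"CN4052S": 2, "CN4054S": 4}

VNICS_PER_PORT = {
    "UFP": {"CN4052S": 8, "CN4054S": 4},
    "vNIC2": {"CN4052S": 8, "CN4054S": 4},
    "vNIC1": {"CN4052S": 4, "CN4054S": 4},
}


def parse_version(text):
    """Turn a dotted version string such as "10.6" into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


def vnics_per_port(adapter, mode, adapter_fw, switch_fw=None):
    """Return the number of vNICs per port, applying the firmware footnote."""
    per_port = VNICS_PER_PORT[mode][adapter]
    if per_port == 8:
        firmware_ok = parse_version(adapter_fw) >= (10, 6)
        if mode == "UFP":
            # Per the footnote, UFP also needs switch firmware 8.3 or later.
            firmware_ok = (
                firmware_ok
                and switch_fw is not None
                and parse_version(switch_fw) >= (8, 3)
            )
        if not firmware_ok:
            per_port = 4  # assumed limit with earlier firmware
    return per_port


def total_vnics(adapter, mode, adapter_fw, switch_fw=None):
    """Total vNICs per adapter: physical ports times vNICs per port."""
    return PORTS[adapter] * vnics_per_port(adapter, mode, adapter_fw, switch_fw)
```

With these inputs, a CN4052S in UFP mode with adapter firmware 10.6 and switch firmware 8.3 yields 16 vNICs, and the same adapter in vNIC1 mode yields 8.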

Ethernet and NIC features

- NDIS 5.2, 6.0, and 6.2 compliant Ethernet functionality

- IPv4/IPv6 TCP, UDP, and Checksum Offload

- IPv4/IPv6 TCP, UDP Receive Side Scaling (RSS)

- IPv4/IPv6 Large Send Offload (LSO)

- Programmable MAC addresses

- 128 MAC/VLAN addresses per port

- Support for hash-based broadcast frame filters per port

- VLAN insertion and extraction

- 9216-byte jumbo frame support

- Receive Side Scaling (RSS)

- Filters: MAC and VLAN

- PXE Boot support

Remote Direct Memory Access (RDMA):

- Direct data placement in application buffers without processor intervention

- Supports RDMA over converged Ethernet (RoCE) specifications

- Supports RoCE v2 by replacing RoCE over IP with RoCE over UDP

- 20% more RoCE Queue Pairs than VFA5

- 16K RoCE Memory Regions (VFA5 offers 8K)

- Linux OpenFabrics Enterprise Distribution (OFED) support

- Low latency queues for small packet sends and receives

- Local interprocess communication option by internal VEB switch

- TCP/IP Stack By-Pass

Data Center Bridging / Converged Enhanced Ethernet (DCB/CEE):

- Hardware Offloads of Ethernet TCP/IP

- 802.1Qbb Priority Flow Control (PFC)

- 802.1Qaz Enhanced Transmission Selection (ETS)

- 802.1Qaz Data Center Bridging Exchange (DCBX)

Fibre Channel over Ethernet (FCoE) offload:

- Availability:

- Standard feature with 01CV780 and 01CV790

- Optional feature with 00AG540 and 00AG590 via a Features on Demand upgrade, only supported with Flex System servers (not supported with ThinkSystem compute nodes)

- Hardware offloads of Ethernet TCP/IP

- ANSI T11 FC-BB-5 Support

- Programmable Worldwide Name (WWN)

- Support for FIP and FCoE Ether Types

- Supports up to 255 NPIV Interfaces per port

- FCoE Initiator

- Common driver for Emulex Universal CNA and Fibre Channel HBAs

- 255 N_Port ID Virtualization (NPIV) interfaces per port

- Fabric Provided MAC Addressing (FPMA) support

- Up to 4096 concurrent port logins (RPIs) per port

- Up to 2048 active exchanges (XRIs) per port

- FCoE Boot support

iSCSI offload:

- Availability:

- Standard feature with 01CV780 and 01CV790

- Optional feature with 00AG540 and 00AG590 via a Features on Demand upgrade, only supported with Flex System servers (not supported with ThinkSystem compute nodes)

- Full iSCSI Protocol Offload

- Header, Data Digest (CRC), and PDU

- Direct data placement of SCSI data

- 2048 Offloaded iSCSI connections

- iSCSI initiator and concurrent initiator/target modes

- Multipath I/O

- OS-neutral INT13-based iSCSI boot and iSCSI crash memory dump support

- RFC 4171 Internet Storage Name Service (iSNS)

- iSCSI Boot support

IEEE Standards supported:

- PCI Express base spec 2.0, PCI Bus Power Management Interface, rev. 1.2, Advanced Error Reporting (AER)

- 802.3-2008 10GBASE Ethernet port

- 802.1Q VLAN

- 802.3x Flow Control with pause frames

- 802.1Qbg Edge Virtual Bridging

- 802.1Qaz Enhanced Transmission Selection (ETS) and Data Center Bridging Exchange (DCBX)

- 802.1Qbb Priority Flow Control

- 802.3ad Link Aggregation/LACP

- 802.1AB Link Layer Discovery Protocol

- 802.3ae (SR Optics)

- 802.1AX (Link Aggregation)

- 802.1p (Priority of Service)

- 802.1Qau (Congestion Notification)

- IPv4 (RFC 791)

- IPv6 (RFC 2460)

Modes of operation

The CN4054S and CN4052S support the following vNIC modes of operation (plus pNIC mode):

- Virtual Fabric Mode (also known as vNIC1 mode). This mode works only with the following switches that are installed in the chassis:

- Flex System Fabric CN4093 10Gb Converged Scalable Switch

- Flex System Fabric EN4093R 10Gb Scalable Switch

- Flex System Fabric EN4093 10Gb Scalable Switch

In this mode, the adapter communicates using DCBX with the switch module to obtain vNIC parameters. Also, a special tag within each data packet is added and later removed by the NIC and switch for each vNIC group, to maintain separation of the virtual channels.

In vNIC mode, each physical port is divided into four virtual ports, which provides a total of 16 (CN4054S) or eight (CN4052S) virtual NICs per adapter. The default bandwidth for each vNIC is 2.5 Gbps. Bandwidth for each vNIC can be configured at the switch—from 100 Mbps to 10 Gbps—up to a total of 10 Gbps per physical port. The vNICs can also be configured to have zero bandwidth if you want to allocate the available bandwidth among fewer than eight vNICs. In Virtual Fabric Mode, you can change the bandwidth allocations through the switch user interfaces without requiring a reboot of the server.

When storage protocols are enabled on the adapter (enabled by default or with the appropriate FoD upgrade, as listed in Part number information), one vNIC in each port is for storage protocols. For the CN4054S, four vNICs are used for FCoE/iSCSI (leaving 12 for Ethernet), and for the CN4052S, two vNICs are used for FCoE/iSCSI (leaving six for Ethernet).

- Switch Independent Mode (also known as vNIC2 mode), where the adapter works with the following switches:

- Cisco Nexus B22 Fabric Extender for Flex System

- Flex System EN4023 10Gb Scalable Switch

- Flex System Fabric CN4093 10Gb Converged Scalable Switch

- Flex System Fabric EN4093R 10Gb Scalable Switch

- Flex System Fabric EN4093 10Gb Scalable Switch

- Flex System Fabric SI4091 System Interconnect Module

- Flex System Fabric SI4093 System Interconnect Module

- Flex System EN4091 10Gb Ethernet Pass-thru and a top-of-rack (TOR) switch

Switch Independent Mode allows a single 10 Gb port to be split into eight virtual NICs (CN4052S) or four virtual NICs (CN4054S). Switch Independent Mode extends the existing customer VLANs to the virtual NIC interfaces. The IEEE 802.1Q VLAN tag is essential to the separation of the vNIC groups by the NIC adapter or driver and the switch. The VLAN tags are added to the packet by the applications or drivers at each end station rather than by the switch.

Note: The use of 8 vNIC partitions with the CN4052S requires adapter firmware 10.6 or later and switch firmware 8.3 or later. With earlier firmware, Switch Independent Mode support is limited to 4 virtual NICs per adapter port.

- Unified Fabric Port (UFP) provides a more feature-rich solution than the original vNIC Virtual Fabric mode. UFP allows a single 10 Gb port to be split into eight virtual NICs (CN4052S) or four virtual NICs (CN4054S), called vPorts in UFP. UFP also has the following modes that are associated with it:

- Tunnel mode: Provides Q-in-Q mode, where the vPort is customer VLAN-independent (very similar to vNIC Virtual Fabric Dedicated Uplink Mode).

- Trunk mode: Provides a traditional 802.1Q trunk mode (multi-VLAN trunk link) to the virtual NIC (vPort) interface; that is, it permits host-side tagging.

- Access mode: Provides a traditional access mode (single untagged VLAN) to the virtual NIC (vPort) interface, which is similar to a physical port in access mode.

- FCoE mode: Provides FCoE functionality to the vPort.

- Auto-VLAN mode: Auto VLAN creation for Qbg and VMready environments.

Only one vPort (vPort 2) per physical port can be bound to FCoE. If FCoE is not wanted, vPort 2 can be configured for one of the other modes.

UFP works with the following switches:

- Flex System Fabric CN4093 10Gb Converged Scalable Switch

- Flex System Fabric EN4093R 10Gb Scalable Switch

- Flex System Fabric SI4093 System Interconnect Module

Note: The use of 8 vNIC partitions with the CN4052S requires adapter firmware 10.6 or later and switch firmware 8.3 or later. With earlier firmware, UFP support is limited to 4 virtual NICs per adapter port.

- In pNIC mode, the adapter can operate as a standard 10 Gbps or 1 Gbps 4-port (CN4054S) or 2-port (CN4052S) Ethernet expansion card. When in pNIC mode, the adapter functions with all supported I/O modules.

In pNIC mode, an adapter with the FoD upgrade applied operates in traditional Converged Network Adapter (CNA) mode with four ports (CN4054S) or two ports (CN4052S) of Ethernet and four ports (CN4054S) or two ports (CN4052S) of iSCSI or FCoE available to the operating system.
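The bandwidth rules stated for Virtual Fabric Mode (four vNICs per port, 100 Mbps to 10 Gbps each or zero, at most 10 Gbps in total per port) and the storage vNIC accounting can be checked with a few lines of code. The following Python sketch is a planning aid under those stated rules only. The function and constant names are hypothetical, and it does not reflect how the switch firmware validates settings.

```python
PORT_CAPACITY_MBPS = 10_000  # 10 Gbps per physical port
VNICS_PER_PORT = 4           # Virtual Fabric Mode divides each port into four vNICs
MIN_VNIC_MBPS = 100          # smallest non-zero allocation
DEFAULT_ALLOCATION = [2_500] * VNICS_PER_PORT  # default of 2.5 Gbps per vNIC


def validate_allocation(mbps_per_vnic):
    """Return a list of problems with one port's allocation (empty if valid)."""
    problems = []
    if len(mbps_per_vnic) != VNICS_PER_PORT:
        problems.append(
            f"expected {VNICS_PER_PORT} vNIC values, got {len(mbps_per_vnic)}"
        )
    for index, mbps in enumerate(mbps_per_vnic, start=1):
        if mbps == 0:
            continue  # zero bandwidth is allowed for an unused vNIC
        if not MIN_VNIC_MBPS <= mbps <= PORT_CAPACITY_MBPS:
            problems.append(f"vNIC {index}: {mbps} Mbps is outside 100-10000 Mbps")
    if sum(mbps_per_vnic) > PORT_CAPACITY_MBPS:
        problems.append("total exceeds 10 Gbps for the port")
    return problems


def ethernet_vnics(ports, storage_enabled=True):
    """vNICs left for Ethernet when one vNIC per port carries FCoE or iSCSI."""
    total = ports * VNICS_PER_PORT
    return total - ports if storage_enabled else total
```

The default allocation passes validation, as does giving one vNIC the full 10 Gbps and setting the other three to zero. `ethernet_vnics(4)` and `ethernet_vnics(2)` reproduce the 12 and 6 Ethernet vNICs quoted for the CN4054S and CN4052S.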

Server support

The following table lists the ThinkSystem and Flex System compute nodes that support the adapters.

| Part number | Description | x240 (8737, E5-2600 v2) | x240 (7162) | x240 M5 (9532, E5-2600 v3) | x240 M5 (9532, E5-2600 v4) | x440 (7167) | x880/x480/x280 X6 (7903) | x280/x480/x880 X6 (7196) | SN550 (7X16) | SN850 (7X15) | SN550 V2 (7Z69) |

|---|---|---|---|---|---|---|---|---|---|---|---|

| Adapters - ThinkSystem and Flex System compute nodes | |||||||||||

| 01CV780 | Flex System CN4052S 2-port 10Gb Virtual Fabric Adapter Advanced | N | N | Y | Y | Y | Y | Y | Y | Y | Y |

| 01CV790 | Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter Advanced | N | N | Y | Y | Y | Y | Y | Y | Y | Y |

| 00AG540 | Flex System CN4052S 2-port 10Gb Virtual Fabric Adapter | N | N | Y | Y | Y | N | Y | Y | Y | Y |

| 00AG590 | Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Features on Demand upgrades - Flex System compute nodes only | |||||||||||

| 00JY804 | Flex System CN4052 Virtual Fabric Adapter SW Upgrade (FoD) | Y | Y | Y | Y | Y | Y | Y | N | N | N |

| 00AG594 | Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter SW Upgrade (FoD) | Y | Y | Y | Y | Y | Y | Y | N | N | N |

I/O module support

These adapters can be installed in any I/O adapter slot of a supported Flex System compute node. One or two compatible 1 Gb or 10 Gb I/O modules must be installed in the corresponding I/O bays in the chassis. The following table lists the switches that are supported. When connected to the 1 Gb switch, the adapter will operate at 1 Gb speeds. When connected to the 40 Gb switch, the adapter will operate at 10 Gb speeds.

To maximize the number of adapter ports usable, you may also need to order switch upgrades to enable additional ports. Alternatively, for CN4093, EN4093R, and SI4093 switches, you can use Flexible Port Mapping (FPM), a feature of Networking OS 7.8 or later, that allows you to minimize the number of upgrades needed.

See the Product Guides for the Flex System switches for more details about switch upgrades and FPM:

https://lenovopress.com/servers/blades/networkmodule

The following table lists how many adapter ports are enabled with each supported switch. For the CN4054S, to enable all 4 adapter ports, either upgrade the switch or use Flexible Port Mapping. Switches should be installed in pairs to maximize the number of ports enabled and to provide redundant network connections.

| Part number | Description | CN4052S ports† | CN4054S ports† |

|---|---|---|---|

| 4SG7A08868 | Lenovo ThinkSystem NE2552E Flex Switch | 2 | 4 |

| 00FM514 | Lenovo Flex System Fabric EN4093R 10Gb Scalable Switch | 2 | 4** |

| 00FM510 | Lenovo Flex System Fabric CN4093 10Gb Converged Scalable Switch | 2 | 4** |

| 00FE327 | Lenovo Flex System SI4091 10Gb System Interconnect Module | 2 | 2 |

| 00FM518 | Lenovo Flex System Fabric SI4093 System Interconnect Module | 2 | 4** |

| 90Y9346 | Flex System EN6131 40Gb Ethernet Switch | 2 | 2 |

| 88Y6043 | Flex System EN4091 10Gb Ethernet Pass-thru | 2 | 2 |

| 49Y4294 | Flex System EN2092 1Gb Ethernet Scalable Switch | 2 | 4** |

| 94Y5350 | Cisco Nexus B22 Fabric Extender for Flex System | 2 | 2 |

| 00D5823* | Flex System Fabric CN4093 10Gb Converged Scalable Switch | 2 | 4** |

| 95Y3309* | Flex System Fabric EN4093R 10Gb Scalable Switch | 2 | 4** |

| 49Y4270* | Flex System Fabric EN4093 10Gb Scalable Switch | 2 | 4** |

| 95Y3313* | Flex System Fabric SI4093 System Interconnect Module | 2 | 4** |

| 94Y5212* | Flex System EN4023 10Gb Scalable Switch | 2 | 4** |

* Withdrawn from marketing

† This is the number of adapter ports that will be enabled per adapter, and requires that two switches be installed in the chassis.

** The use of 4 ports will require either a switch upgrade to enable additional ports or the use of Flexible Port Mapping to reconfigure the active ports

The following table shows the connections between adapters installed in the compute nodes and the switch bays in the chassis.

| I/O adapter slot in the server | Port on the adapter | Corresponding I/O module bay in the chassis |

|---|---|---|

| Slot 1 | Port 1 | Module bay 1 |

| Slot 1 | Port 2 | Module bay 2 |

| Slot 1 | Port 3* | Module bay 1 |

| Slot 1 | Port 4* | Module bay 2 |

| Slot 2 | Port 1 | Module bay 3 |

| Slot 2 | Port 2 | Module bay 4 |

| Slot 2 | Port 3* | Module bay 3 |

| Slot 2 | Port 4* | Module bay 4 |

| Slot 3 (full-wide compute nodes only) | Port 1 | Module bay 1 |

| Slot 3 (full-wide compute nodes only) | Port 2 | Module bay 2 |

| Slot 3 (full-wide compute nodes only) | Port 3* | Module bay 1 |

| Slot 3 (full-wide compute nodes only) | Port 4* | Module bay 2 |

| Slot 4 (full-wide compute nodes only) | Port 1 | Module bay 3 |

| Slot 4 (full-wide compute nodes only) | Port 2 | Module bay 4 |

| Slot 4 (full-wide compute nodes only) | Port 3* | Module bay 3 |

| Slot 4 (full-wide compute nodes only) | Port 4* | Module bay 4 |

* Ports 3 and 4 (CN4054S only) require Upgrade 1 of the selected switch, where applicable. 14-port modules such as the EN4091 Pass-thru, SI4091 switch, and Cisco B22 only support ports 1 and 2 (and only when two I/O modules are installed).
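The slot-to-bay table above follows a regular pattern: odd-numbered adapter slots connect to module bays 1 and 2, even-numbered slots connect to bays 3 and 4, and odd and even ports alternate between the two bays. The following Python sketch expresses that pattern. The function name is hypothetical, and it does not model the switch upgrade or 14-port module restrictions described in the footnote.

```python
def module_bay(slot, port):
    """Return the I/O module bay that a given adapter slot and port connect to.

    slot: I/O adapter slot 1-4 (slots 3 and 4 exist only in full-wide nodes)
    port: adapter port 1-4 (ports 3 and 4 exist only on the CN4054S)
    """
    if slot not in (1, 2, 3, 4):
        raise ValueError(f"invalid adapter slot: {slot}")
    if port not in (1, 2, 3, 4):
        raise ValueError(f"invalid adapter port: {port}")
    first_bay = 1 if slot % 2 == 1 else 3  # odd slots use bays 1-2, even slots 3-4
    return first_bay + (port - 1) % 2      # odd ports -> first bay, even -> second


# Print the full mapping for comparison with the table above.
for slot in (1, 2, 3, 4):
    for port in (1, 2, 3, 4):
        print(f"Slot {slot}, port {port} -> module bay {module_bay(slot, port)}")
```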

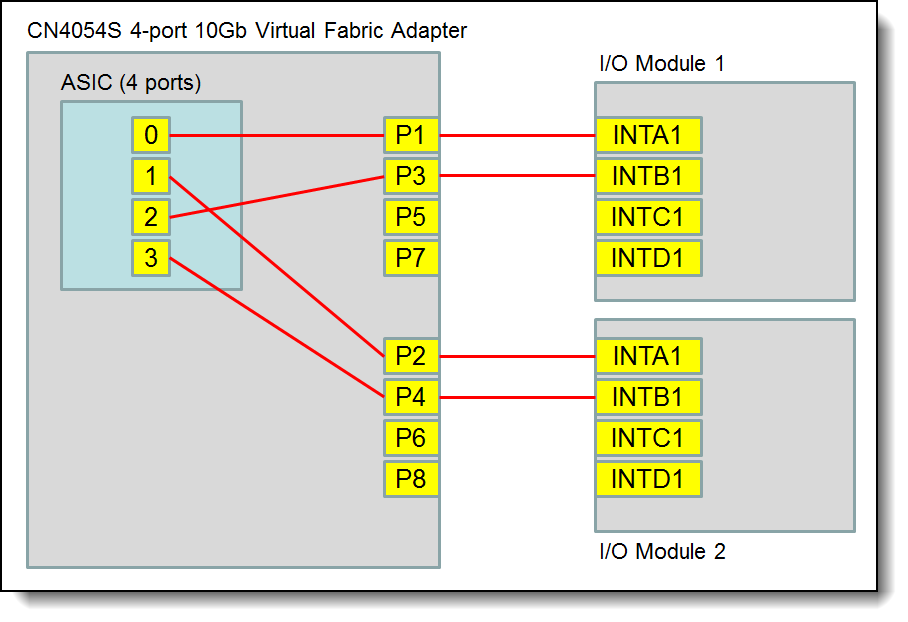

The following figure shows the internal layout of the CN4054S and how the adapter ports are routed to the I/O module internal ports.

Note: INTD1 is not available on any currently shipping Flex System I/O modules.

Figure 2. Internal layout of the CN4054S adapter ports

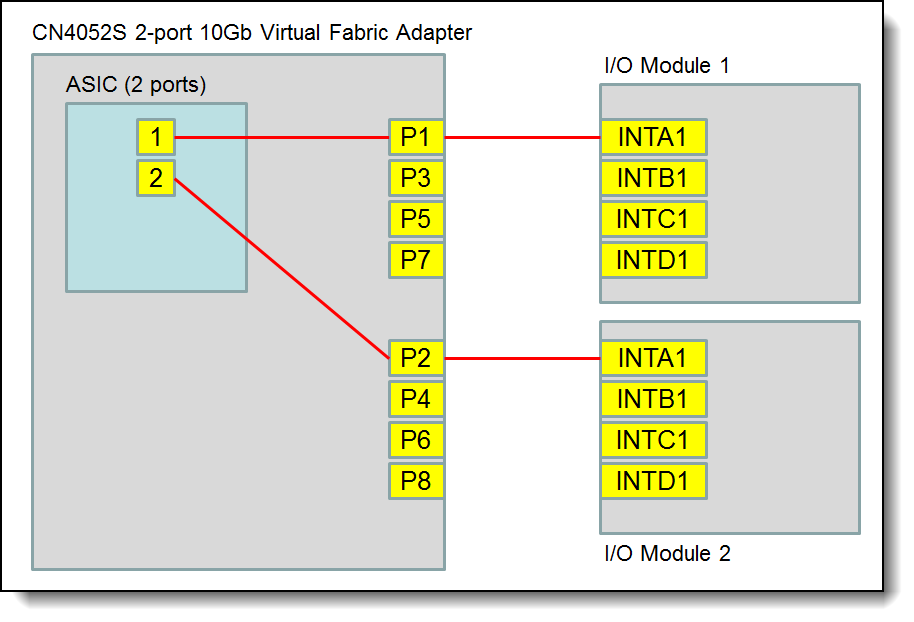

The following figure shows the internal layout of the CN4052S, and how the adapter ports are routed to the I/O module internal ports.

Note: INTD1 is not available on any currently shipping Flex System I/O modules.

Figure 3. Internal layout of the CN4052S adapter ports

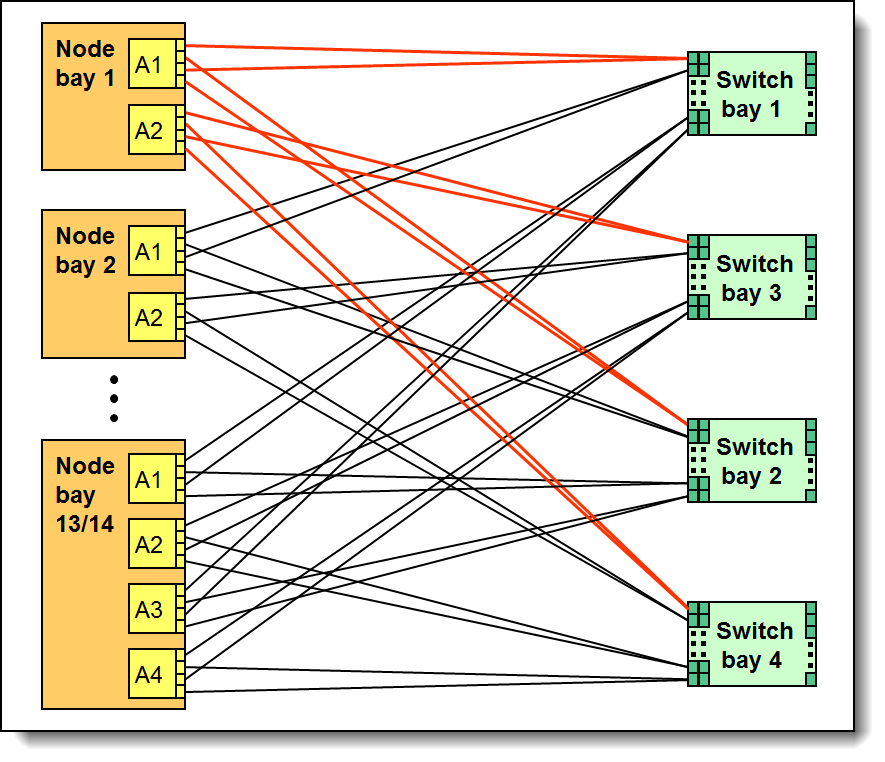

The connections between the adapters installed in the compute nodes and the switch bays in the chassis are shown in the following figure. The figure shows half-wide servers (such as the x240 M5 with two adapters) and full-wide servers (such as the x440 with four adapters).

Figure 4. Logical layout of the interconnects between I/O adapters and I/O modules

Operating system support

The following tables list operating system support:

| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) | x240 M5 (9532) | x280/x480/x880 X6 (7196) | x440 (7167) |

|---|---|---|---|---|---|---|---|---|

| Microsoft Windows Server 2012 | N | N | N | N | N | Y | N | Y |

| Microsoft Windows Server 2012 R2 | N | N | N | Y | Y | Y | N | Y |

| Microsoft Windows Server 2016 | Y | Y | Y | Y | Y | Y | N | Y |

| Microsoft Windows Server 2019 | Y | Y | Y | Y | Y | Y | N | N |

| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y | N | N | N |

| Microsoft Windows Server version 1709 | N | N | N | Y | Y | Y | Y | Y |

| Microsoft Windows Server version 1803 | N | N | N | Y | N | N | N | N |

| Red Hat Enterprise Linux 6.10 | N | N | N | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 6.9 | N | N | N | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.3 | N | N | N | Y | Y | N | N | N |

| Red Hat Enterprise Linux 7.4 | N | N | N | Y | Y | N | N | N |

| Red Hat Enterprise Linux 7.5 | N | N | N | Y | Y | N | N | N |

| Red Hat Enterprise Linux 7.6 | N | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 7.7 | N | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 7.8 | N | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 7.9 | Y | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.0 | N | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.1 | N | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.2 | Y | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.3 | Y | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.4 | Y | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.5 | Y | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.6 | Y | Y | Y | Y | Y | N | N | N |

| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 11 SP4 | N | N | N | Y | Y | Y | N | Y |

| SUSE Linux Enterprise Server 11 SP4 with Xen | N | N | N | Y | Y | Y | N | Y |

| SUSE Linux Enterprise Server 11 for x86 | N | N | N | N | N | N | N | Y |

| SUSE Linux Enterprise Server 12 SP2 | N | N | N | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 with Xen | N | N | N | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 | N | N | N | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 with Xen | N | N | N | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 | N | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 with Xen | N | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 with Xen | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 | N | Y | Y | Y | Y | Y | Y | N |

| SUSE Linux Enterprise Server 15 SP1 | N | Y | Y | Y | Y | Y | Y | N |

| SUSE Linux Enterprise Server 15 SP1 with Xen | N | Y | Y | Y | Y | Y | Y | N |

| SUSE Linux Enterprise Server 15 SP2 | Y | Y | Y | Y | Y | Y | Y | N |

| SUSE Linux Enterprise Server 15 SP2 with Xen | Y | Y | Y | Y | Y | Y | Y | N |

| SUSE Linux Enterprise Server 15 SP3 | Y | Y | Y | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP3 with Xen | Y | Y | Y | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 | Y | Y | Y | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 with Xen | Y | Y | Y | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 | Y | Y | Y | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 with Xen | Y | Y | Y | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 with Xen | N | Y | Y | Y | Y | Y | Y | N |

| Ubuntu 18.04.5 LTS | Y | N | N | N | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 5.5 | N | N | N | N | N | Y | N | Y |

| VMware vSphere Hypervisor (ESXi) 6.0 U3 | N | N | N | Y | Y | Y | N | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 | N | N | N | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U1 | N | N | N | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U2 | N | Y | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.7 | N | N | N | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U1 | N | Y | Y | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U2 | N | Y | Y | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U3 | Y | Y | Y | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 | N | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U1 | N | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U2 | Y | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U3 | Y | Y | Y | Y | Y | N | N | N |

| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) | x240 (8737, E5 v2) | x240 (7162) | x240 M5 (9532) | x280/x480/x880 X6 (7196) | x280/x480/x880 X6 (7903) | x440 (7167) | x440 (7917) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Microsoft Windows Server 2012 | N | N | N | N | N | Y | Y | Y | N | Y | Y | Y |

| Microsoft Windows Server 2012 R2 | N | N | N | Y | Y | Y | Y | Y | N | Y | Y | Y |

| Microsoft Windows Server 2016 | Y | Y | Y | Y | Y | Y | N | Y | N | Y | Y | N |

| Microsoft Windows Server 2019 | Y | Y | Y | Y | Y | N | N | Y | N | N | N | N |

| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Microsoft Windows Server version 1709 | N | N | N | Y | Y | N | N | Y | Y | N | Y | N |

| Microsoft Windows Server version 1803 | N | N | N | Y | N | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 6.10 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 6.9 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.3 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.4 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.5 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.6 | N | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.7 | N | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.8 | N | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.9 | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.0 | N | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 8.1 | N | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 8.2 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 8.3 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 8.4 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 8.5 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 8.6 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| SUSE Linux Enterprise Server 11 SP4 | N | N | N | Y | Y | Y | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 11 SP4 with Xen | N | N | N | Y | Y | Y | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 11 for x86 | N | N | N | N | N | N | Y | N | N | N | Y | N |

| SUSE Linux Enterprise Server 12 SP2 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 with Xen | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 with Xen | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 | N | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 with Xen | N | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 with Xen | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 15 | N | Y | Y | Y | Y | N | N | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP1 | N | Y | Y | Y | Y | N | N | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP1 with Xen | N | Y | Y | Y | Y | N | N | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP2 | Y | Y | Y | Y | Y | N | N | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP2 with Xen | Y | Y | Y | Y | Y | N | N | Y | Y | N | N | N |

| SUSE Linux Enterprise Server 15 SP3 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP3 with Xen | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 with Xen | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 with Xen | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| SUSE Linux Enterprise Server 15 with Xen | N | Y | Y | Y | Y | N | N | Y | Y | N | N | N |

| Ubuntu 18.04.5 LTS | Y | N | N | N | N | N | N | N | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 5.5 | N | N | N | N | N | Y | Y | Y | N | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.0 U3 | N | N | N | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 | N | N | N | Y | Y | Y | N | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U1 | N | N | N | Y | Y | Y | N | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U2 | N | Y | Y | Y | Y | Y | N | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y | Y | N | Y | Y | Y | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 | N | N | N | Y | Y | N | N | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U1 | N | Y | Y | Y | Y | N | N | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U2 | N | Y | Y | Y | Y | N | N | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U3 | Y | Y | Y | Y | Y | N | N | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 | N | Y | Y | Y | Y | N | N | N | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U1 | N | Y | Y | Y | Y | N | N | N | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U2 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U3 | Y | Y | Y | Y | Y | N | N | N | N | N | N | N |

| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) | x240 M5 (9532) | x280/x480/x880 X6 (7196) | x280/x480/x880 X6 (7903) | x440 (7917) |

|---|---|---|---|---|---|---|---|---|---|

| Microsoft Windows Server 2012 | N | N | N | N | N | Y | Y | Y | Y |

| Microsoft Windows Server 2012 R2 | N | N | N | Y | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2016 | Y | Y | Y | Y | Y | Y | Y | Y | N |

| Microsoft Windows Server 2019 | Y | Y | Y | Y | Y | Y | N | N | N |

| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y | N | N | N | N |

| Microsoft Windows Server version 1709 | N | N | N | Y | Y | Y | Y | N | N |

| Microsoft Windows Server version 1803 | N | N | N | Y | N | N | N | N | N |

| Red Hat Enterprise Linux 6.10 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 6.9 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.3 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.4 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.5 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.6 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.7 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.8 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.9 | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.0 | N | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.1 | N | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.2 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.3 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.4 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.5 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.6 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 11 SP4 | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 11 SP4 with Xen | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 11 for x86 | N | N | N | N | N | N | Y | N | N |

| SUSE Linux Enterprise Server 12 SP2 | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 with Xen | N | N | N | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 with Xen | N | N | N | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 with Xen | N | Y | Y | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 with Xen | Y | Y | Y | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 15 | N | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP1 | N | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP1 with Xen | N | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP2 | Y | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP2 with Xen | Y | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP3 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP3 with Xen | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 with Xen | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 with Xen | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 with Xen | N | Y | Y | Y | Y | Y | Y | N | N |

| Ubuntu 18.04.5 LTS | Y | N | N | N | N | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 5.5 | N | N | N | N | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.0 U3 | N | N | N | Y | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 | N | N | N | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U1 | N | N | N | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U2 | N | Y | Y | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.7 | N | N | N | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U1 | N | Y | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U2 | N | Y | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U3 | Y | Y | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 | N | Y | Y | Y | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U1 | N | Y | Y | Y | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U2 | Y | Y | Y | Y | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U3 | Y | Y | Y | Y | Y | N | N | N | N |

| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) | x240 M5 (9532) | x280/x480/x880 X6 (7196) | x280/x480/x880 X6 (7903) | x440 (7917) |

|---|---|---|---|---|---|---|---|---|---|

| Microsoft Windows Server 2012 | N | N | N | N | N | Y | Y | Y | Y |

| Microsoft Windows Server 2012 R2 | N | N | N | Y | Y | Y | Y | Y | Y |

| Microsoft Windows Server 2016 | Y | Y | Y | Y | Y | Y | Y | Y | N |

| Microsoft Windows Server 2019 | Y | Y | Y | Y | Y | Y | N | N | N |

| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y | N | N | N | N |

| Microsoft Windows Server version 1709 | N | N | N | Y | Y | Y | Y | N | N |

| Microsoft Windows Server version 1803 | N | N | N | Y | N | N | N | N | N |

| Red Hat Enterprise Linux 6.10 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 6.9 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.3 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.4 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.5 | N | N | N | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.6 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.7 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.8 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 7.9 | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| Red Hat Enterprise Linux 8.0 | N | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.1 | N | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.2 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.3 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.4 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.5 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.6 | Y | Y | Y | Y | Y | N | N | N | N |

| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 11 SP4 | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 11 SP4 with Xen | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 11 for x86 | N | N | N | N | N | N | Y | N | N |

| SUSE Linux Enterprise Server 12 SP2 | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP2 with Xen | N | N | N | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 | N | N | N | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP3 with Xen | N | N | N | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 | N | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP4 with Xen | N | Y | Y | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 | Y | Y | Y | Y | Y | Y | Y | Y | Y |

| SUSE Linux Enterprise Server 12 SP5 with Xen | Y | Y | Y | Y | Y | N | Y | Y | Y |

| SUSE Linux Enterprise Server 15 | N | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP1 | N | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP1 with Xen | N | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP2 | Y | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP2 with Xen | Y | Y | Y | Y | Y | Y | Y | N | N |

| SUSE Linux Enterprise Server 15 SP3 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP3 with Xen | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP4 with Xen | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 SP5 with Xen | Y | Y | Y | Y | Y | N | N | N | N |

| SUSE Linux Enterprise Server 15 with Xen | N | Y | Y | Y | Y | Y | Y | N | N |

| Ubuntu 18.04.5 LTS | Y | N | N | N | N | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 5.5 | N | N | N | N | N | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.0 U3 | N | N | N | Y | Y | Y | Y | Y | Y |

| VMware vSphere Hypervisor (ESXi) 6.5 | N | N | N | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U1 | N | N | N | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U2 | N | Y | Y | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y | Y | Y | Y | N |

| VMware vSphere Hypervisor (ESXi) 6.7 | N | N | N | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U1 | N | Y | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U2 | N | Y | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 6.7 U3 | Y | Y | Y | Y | Y | Y | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 | N | Y | Y | Y | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U1 | N | Y | Y | Y | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U2 | Y | Y | Y | Y | Y | N | N | N | N |

| VMware vSphere Hypervisor (ESXi) 7.0 U3 | Y | Y | Y | Y | Y | N | N | N | N |
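
For scripted compatibility checks, a support matrix of this shape can be read into a lookup structure. The following is a minimal Python sketch, assuming the table is available as pipe-delimited text with the header on a single line. The names `TABLE`, `parse_matrix`, and `supported_servers` are hypothetical and chosen for illustration; the three sample rows are copied from the table above.

```python
# Illustrative sample: header plus three rows copied from the table above.
TABLE = """\
| Operating systems | SN550 V2 | SN550 (Xeon Gen 2) | SN850 (Xeon Gen 2) | SN550 (Xeon Gen 1) | SN850 (Xeon Gen 1) | x240 M5 (9532) | x280/x480/x880 X6 (7196) | x280/x480/x880 X6 (7903) | x440 (7917) |
|---|---|---|---|---|---|---|---|---|---|
| Microsoft Windows Server 2022 | Y | Y | Y | Y | Y | N | N | N | N |
| Red Hat Enterprise Linux 8.7 | Y | Y | Y | Y | Y | N | N | N | N |
| VMware vSphere Hypervisor (ESXi) 6.5 U3 | N | Y | Y | Y | Y | Y | Y | Y | N |
"""


def parse_matrix(text):
    """Return {os_name: {server: bool}} from a pipe-delimited Y/N table."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip blank lines and anything that is not a table row
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if all(set(cell) <= {"-"} for cell in cells):
            continue  # skip the |---|---| separator row
        rows.append(cells)
    header, body = rows[0], rows[1:]
    servers = header[1:]  # first column holds the operating system name
    return {
        row[0]: {server: flag == "Y" for server, flag in zip(servers, row[1:])}
        for row in body
    }


def supported_servers(matrix, os_name):
    """List the servers marked Y for the given operating system."""
    return [server for server, ok in matrix[os_name].items() if ok]


matrix = parse_matrix(TABLE)
print(supported_servers(matrix, "Microsoft Windows Server 2022"))
```

A dictionary keyed by operating system keeps lookups simple. The same parser can be pointed at the full table text, and ServerProven remains the authoritative source for current support status.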

Warranty

The Flex System CN4054S 4-port and CN4052S 2-port 10Gb Virtual Fabric Adapters have a 1-year, customer-replaceable unit (CRU) limited warranty. When installed in a compute node, these adapters assume the system’s base warranty and any warranty upgrade purchased for the system.

Physical specifications

The adapter features the following dimensions and weight:

- Width: 100 mm (3.9 in.)

- Depth: 80 mm (3.1 in.)

- Weight: 13 g (0.3 lb)

The adapter features the following shipping dimensions and weight (approximate):

- Height: 58 mm (2.3 in.)

- Width: 229 mm (9.0 in.)

- Depth: 208 mm (8.2 in.)

- Weight: 0.4 kg (0.89 lb)

Regulatory compliance

The adapter conforms to the following regulatory standards:

- United States FCC 47 CFR Part 15, Subpart B, ANSI C63.4 (2003), Class A

- United States UL 60950-1, Second Edition

- IEC/EN 60950-1, Second Edition

- FCC - Verified to comply with Part 15 of the FCC Rules, Class A

- Canada ICES-003, issue 4, Class A

- UL/IEC 60950-1

- CSA C22.2 No. 60950-1-03

- Japan VCCI, Class A

- Australia/New Zealand AS/NZS CISPR 22:2006, Class A

- Taiwan BSMI CNS13438, Class A

- Korea KN22, Class A; KN24

- Russia/GOST ME01, IEC-60950-1, GOST R 51318.22-99, GOST R 51318.24-99, GOST R 51317.3.2-2006, GOST R 51317.3.3-99

- IEC 60950-1 (CB Certificate and CB Test Report)

- CE Mark (EN55022 Class A, EN60950-1, EN55024, EN61000-3-2, EN61000-3-3)

- CISPR 22, Class A

Related publications and links

For more information, see the following resources:

- Networking options for ThinkSystem servers

  https://lenovopress.com/lp0765-networking-options-for-thinksystem-servers

- Lenovo Press product guides for Flex System I/O modules

  https://lenovopress.com/servers/blades/networkmodule

- Lenovo Press product guides for Flex System compute nodes

  https://lenovopress.com/servers/blades/server

- Flex System Information Center (User's Guides for servers and options)

  http://flexsystem.lenovofiles.com/help/index.jsp

- Flex System Interoperability Guide

  http://lenovopress.com/fsig

- Flex System Products and Technology

  http://lenovopress.com/sg248255

- ServerProven

  http://www.lenovo.com/us/en/serverproven

Trademarks

Lenovo and the Lenovo logo are trademarks or registered trademarks of Lenovo in the United States, other countries, or both. A current list of Lenovo trademarks is available on the Web at https://www.lenovo.com/us/en/legal/copytrade/.

The following terms are trademarks of Lenovo in the United States, other countries, or both:

Lenovo®

Flex System

ServerProven®

System x®

ThinkSystem®

VMready®

XClarity®

The following terms are trademarks of other companies:

Xeon® is a trademark of Intel Corporation or its subsidiaries.

Linux® is the trademark of Linus Torvalds in the U.S. and other countries.

Microsoft®, Hyper-V®, SQL Server®, SharePoint®, Windows Server®, and Windows® are trademarks of Microsoft Corporation in the United States, other countries, or both.

Other company, product, or service names may be trademarks or service marks of others.

Full Change History

Changes in the August 31, 2022 update:

- The following adapter is withdrawn:

  - Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter Advanced, 01CV790

Changes in the July 2, 2021 update:

- The following adapters are now available again:

  - Flex System CN4052S 2-port 10Gb Virtual Fabric Adapter, 00AG540

  - Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter, 00AG590

  - Flex System CN4052S 2-port 10Gb Virtual Fabric Adapter Advanced, 01CV780

  - Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter Advanced, 01CV790

Changes in the June 29, 2021 update:

- The following adapters are now available again:

  - Flex System CN4052S 2-port 10Gb Virtual Fabric Adapter, 00AG540

  - Flex System CN4054S 4-port 10Gb Virtual Fabric Adapter, 00AG590

- Added the operating system support tables - Operating system support section

Changes in the June 1, 2021 update:

- All adapters are now withdrawn from marketing

Changes in the February 8, 2019 update:

- Updated the server support table - Server support section

  - CN4052S 2-port 10Gb Virtual Fabric Adapter Advanced (01CV780) is supported on x880/x480/x280 X6 (7903)

  - CN4054S 4-port 10Gb Virtual Fabric Adapter Advanced (01CV790) is supported on x880/x480/x280 X6 (7903)

  - CN4054S 4-port 10Gb Virtual Fabric Adapter (00AG590) is supported on x240 (7162) and x880/x480/x280 X6 (7903)

  - CN4052 Virtual Fabric Adapter SW Upgrade (FoD) is supported on x880/x480/x280 X6 (7903)

  - CN4054S 4-port 10Gb Virtual Fabric Adapter SW Upgrade (FoD) (00AG594) is supported on x240 (7162) and x880/x480/x280 X6 (7903)

Changes in the November 8 & 9, 2018 update:

- Updated the server support table - Server support section

- Updated the list of supported operating systems - Operating system support section

- Clarified that INTD1 ports are not available on Lenovo switches currently available - I/O module support section

Changes in the January 4, 2018 update:

- The CN4054S 4-port 10Gb Virtual Fabric Adapter is supported in the x240 (8737, v2)

Changes in the March 21, 2017 update:

- The CN4052S and CN4054S support IEEE 802.1Qau (Congestion Notification)

Changes in the December 5, 2016 update:

- The CN4054S is now supported in the x240 (8737, E5 v2) and x240 (7162)

Changes in the December 18, 2015 update:

- Clarified support for PXE Boot, FCoE Boot, and iSCSI Boot

Changes in the December 1, 2015 update:

- Corrected Figure 3, the port mapping diagram for the CN4054S adapter

First published: 24 November 2015